Abstract

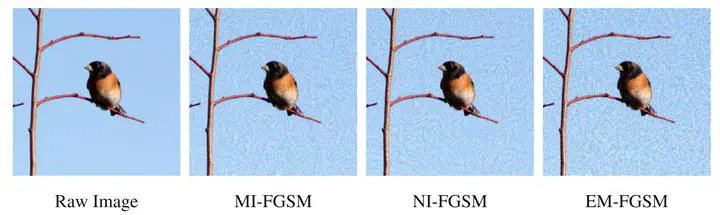

Adversarial examples (AEs) are malicious test-data samples (typically images) generated by applying carefully calculated perturbations to clean samples. The added perturbations are usually imperceptible to humans, yet the resulting AEs can fool a machine learning (ML) model into making misclassifications. Although multiple methods have been proposed to generate AEs, their ability to generalize is very limited; that is, the generated AEs easily overfit to the single white-box source model they were crafted on and rarely work against other models. In this paper, we propose a black-box attack approach that crafts transferable AEs capable of attacking a wide range of ML models without knowledge of those models' details. Our novel method consists of an elastic momentum (EM) that expedites gradient descent to avoid early overfitting, and a random erasure (RE) technique that increases the diversity of perturbations and reduces gradient fluctuations. Our method can be applied to any gradient-based attack to make it more transferable. We evaluate our proposed method by attacking seven state-of-the-art (SOTA) deep learning models and comparing against five SOTA attacks; we also attack nine advanced defense mechanisms that are integrated into the above models. Our results demonstrate significant improvement in attack success rate (ASR) and transferability when our method is used alone, and show that it can also be easily applied to other gradient-based baseline methods to substantially improve their performance.
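To make the general idea concrete, the following is a minimal sketch of an iterative gradient-based attack that combines a momentum term with random erasure of the input. It is an illustration under stated assumptions, not the method proposed in this paper: the momentum uses a plain fixed decay factor `mu` as a stand-in for elastic momentum, the erasure zeroes one random contiguous segment per copy, and the victim is a toy linear logistic model so that the input gradient can be written analytically. All function names and parameters (`random_erase`, `momentum_attack_with_erasure`, `copies`, `max_len`) are hypothetical.

```python
import math
import random


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def random_erase(x, max_len, rng):
    """Zero one random contiguous segment of x; return the copy and its keep-mask."""
    length = rng.randint(1, min(max_len, len(x)))
    start = rng.randrange(0, len(x) - length + 1)
    mask = [1.0] * len(x)
    mask[start:start + length] = [0.0] * length
    return [xi * mi for xi, mi in zip(x, mask)], mask


def loss_gradient(x, mask, y, w, b):
    """Gradient of the logistic loss w.r.t. the un-erased input (toy linear model)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    p = 1.0 / (1.0 + math.exp(-z))
    # Chain rule: gradient only flows through positions that were kept.
    return [(p - y) * wi * mi for wi, mi in zip(w, mask)]


def momentum_attack_with_erasure(x, y, w, b, eps=0.1, steps=10,
                                 mu=1.0, copies=5, max_len=2, seed=0):
    """Iterative sign-gradient attack with fixed-decay momentum (assumed, not EM)."""
    rng = random.Random(seed)
    alpha = eps / steps                      # per-step size
    x_adv = list(x)
    g = [0.0] * len(x)                       # accumulated momentum
    for _ in range(steps):
        avg = [0.0] * len(x)
        for _ in range(copies):              # average gradients over erased copies
            erased, mask = random_erase(x_adv, max_len, rng)
            grad = loss_gradient(erased, mask, y, w, b)
            avg = [a + gi / copies for a, gi in zip(avg, grad)]
        l1 = sum(abs(a) for a in avg) or 1.0
        g = [mu * gi + a / l1 for gi, a in zip(g, avg)]
        stepped = [xa + alpha * sign(gi) for xa, gi in zip(x_adv, g)]
        # Project back into the eps-ball around x, then into the valid range [0, 1].
        x_adv = [min(1.0, max(0.0, min(xi + eps, max(xi - eps, s))))
                 for s, xi in zip(stepped, x)]
    return x_adv


def score(x, w, b):
    """Logit of the toy model; a lower value means less confidence in class 1."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


# Usage: perturb a clean sample of class 1 and compare the model's logit.
w = [1.0, -2.0, 0.5, 1.5, -1.0, 0.8]
x_clean = [0.5] * 6
x_adv = momentum_attack_with_erasure(x_clean, y=1, w=w, b=0.0)
print(score(x_clean, w, 0.0), score(x_adv, w, 0.0))
```

Averaging the gradient over several randomly erased copies is one simple way to diversify the perturbation and smooth the gradient estimate, and the momentum buffer carries the update direction across iterations. In a black-box transfer setting, `x_adv` would be crafted on a surrogate model and then evaluated on other, unseen models.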